About

📋 Biography

Sandeep Madireddy is a Computer Scientist in the Mathematics and Computer Science Division at Argonne National Laboratory. His research spans probabilistic machine learning, bio-inspired and energy-efficient learning, high-performance computing, and generative AI with an emphasis on safety and robustness. His work integrates algorithmic advances with applied research across fusion energy sciences, cosmology and high-energy physics, weather and climate, and materials science.

He previously served as a postdoc and assistant computer scientist in the Mathematics and Computer Science Division, advised by Prasanna Balaprakash and Stefan Wild. Before joining Argonne, he earned his Ph.D. in mechanical and materials engineering (probabilistic machine learning) from the University of Cincinnati as part of the UC Simulation Center (a Procter & Gamble collaboration). He earned his master's at Utah State University and his bachelor's from BITS Pilani, India.

🔬 Projects & Grants

Sandeep serves as a Co-investigator (and AI lead) for DOE- and NSF-funded projects:

- ModCon/Genesis Mission, Lead for Multimodal Scientific AI Reasoning Models BASE Capability Thrust

- AuroraGPT, Co-lead for Evaluation and AI-Safety Thrust

- RAPIDS3: A SciDAC Institute for Computer Science, Data, and Artificial Intelligence, AI thrust co-lead

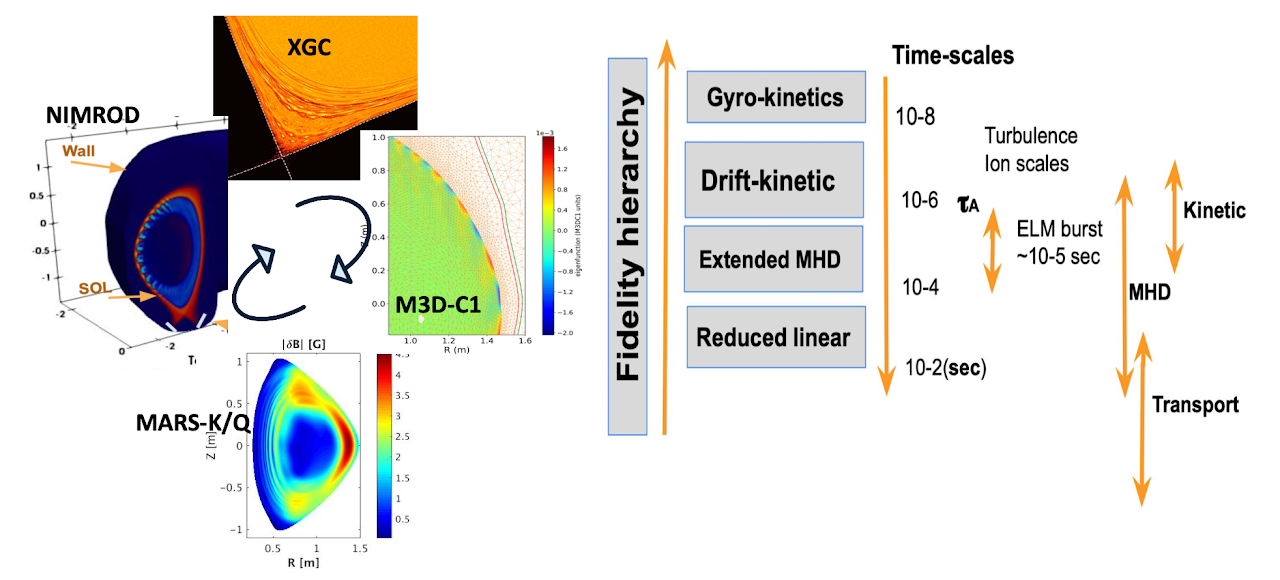

- CETOP: A Center for Edge of Tokamak OPtimization, Co-investigator and AI Lead

📝 Professional Service

Sandeep also provides professional service to machine learning, high-performance computing, and domain-science conferences and journals.

- PC member (HPC): Supercomputing 2023 and 2026, CCGRID 2024, INFOCOMP 2019-2023; reviewer for IPDPS and IEEE Cluster

- Reviewer (AI): ICML (2021-2024), ICLR (2021-2024), NeurIPS (2021-2025), AISTATS (2023, 2025)

- Journals: Nature, Neural Networks, NeuroComputing, JMLMC, SIAM SISC, JPDC, TPDS, Parallel Computing, TCC, JoS, MNRAS, CISE, IEEE TPSC, and JoLT

Research Areas

Probabilistic Machine Learning

Research at the intersection of Bayesian Inference and Information-Theoretic learning.

Scientific Machine Learning and AI for Science

Theoretical and Applied Machine Learning for Large-scale Science, including Fusion Energy, Astrophysics, HPC, and Climate Science.

Multi-modal Foundation Models

Theoretical and Applied research on developing and evaluating (for skill and safety) Multimodal Language Models as scientific research assistants and Spatio-Temporal Foundation models for science.

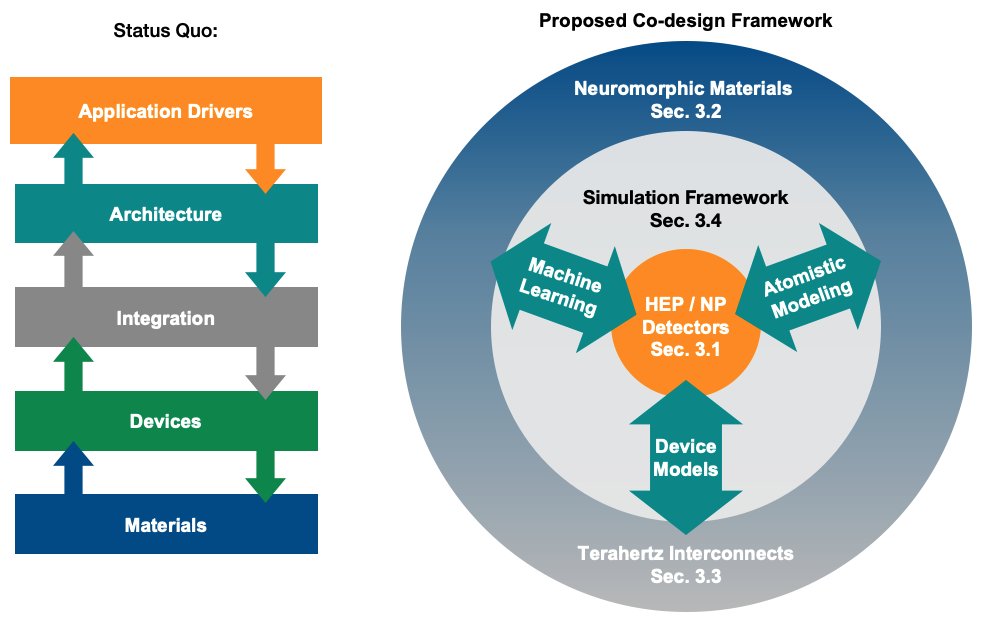

Energy Efficient AI

Energy-efficient AI with bio-inspired architectures, such as neuromorphic computing, for large-scale AI models, and lifelong learning in this paradigm.

News & Announcements

Senior Personnel (ANL), Lab 25-3560, (Lead PI: Rick Stevens, Argonne National Laboratory), FY26-FY28

Co-PI (ANL), Foundational Models for Energy Applications, LDRD Prime Focus Area, (Lead PI: Pinaki Pal, Argonne National Laboratory), FY26-FY28

Co-PI (ANL), LAB 25-3510.000002, (Lead PI: Robert Ross, Argonne National Laboratory), FY26-FY31

Co-PI (ANL), Critical Materials and Supply Chains, LDRD Prime Focus Area, (Lead PI: Barron YoungSmith, Argonne National Laboratory), FY26-FY28

Microelectronics Science Research Center Projects for Energy Efficiency and Extreme Environments (LAB 24-3320), (Lead PI: Valerie Taylor, Argonne National Laboratory), FY25-FY27 https://www.energy.gov/sites/default/files/2024-12/122324-msrc-awards-list.pdf

Publications

2026

KORAL: Knowledge Graph Guided LLM Reasoning for SSD Operational Analysis

Preprint: M Akewar, S Madireddy, D Luo, …

arXiv preprint arXiv:2602.10246, 2026

Multi-task Modeling for Engineering Applications with Sparse Data

Preprint: Y Comlek, RM Krishnan, SK Ravi, …

arXiv preprint arXiv:2601.05910, 2026

This paper presents a Multi-Task Gaussian Processes (MTGP) framework designed for engineering systems with multi-source, multi-fidelity data, addressing issues of data scarcity and varying task correlations. By leveraging inter-task relationships, the framework improves prediction accuracy and reduces computational costs, as demonstrated through benchmarks including the Forrester function, 3D void modeling, and friction-stir welding scenarios.

2025

AERIS: Argonne Earth Systems Model for Reliable and Skillful Predictions

Conference: V Hatanpää, E Ku, J Stock, …

Proceedings of the International Conference for High Performance Computing …, 2025

This research introduces AERIS, a large-scale pixel-level Swin diffusion transformer, and SWiPe, a technique for efficient parallelism in window-based transformers, to improve weather forecasting with diffusion models. AERIS achieves state-of-the-art performance and scalability on the ERA5 dataset, outperforming existing methods and maintaining stability for seasonal-scale predictions up to 90 days.

AILuminate: Introducing v1.0 of the AI Risk and Reliability Benchmark from MLCommons

Preprint: S Ghosh, H Frase, A Williams, …

arXiv preprint arXiv:2503.05731, 2025

The paper presents AILuminate v1.0, the first comprehensive industry-standard benchmark designed to evaluate AI systems' resistance to prompts eliciting dangerous, illegal, or undesirable behaviors across 12 hazard categories. This benchmark features a novel evaluation framework, extensive prompt datasets, a five-tier grading scale, and an entropy-based response assessment, supported by infrastructure for ongoing development and cross-disciplinary input.

AuroraGPT Data Collection Interface

Article: R Underwood, A Maurya, Z Li, …

Argonne National Laboratory (ANL), Argonne, IL (United States), 2025

The software package enables efficient data collection to evaluate the performance of large language models (LLMs) on scientific topics. It is designed to support comprehensive assessment, ensuring accurate measurement of LLM capabilities in understanding and processing scientific information.

Automated MCQA Benchmarking at Scale: Evaluating Reasoning Traces as Retrieval Sources for Domain Adaptation of Small Language Models

Conference: O Gokdemir, N Getty, R Underwood, …

Proceedings of the SC'25 Workshops of the International Conference for High …, 2025

The authors present a scalable, modular framework that automates the creation of multiple-choice question-answering benchmarks from large scientific corpora, demonstrated by generating over 16,000 questions from 22,000 radiation and cancer biology papers. Evaluating small language models on these questions reveals that retrieval-augmented generation using reasoning traces significantly improves performance, enabling several smaller models to outperform GPT-4 on a specialized 2023 exam.

Can LLMs Model the Environmental Impact on SSD?

Conference: M Akewar, G Quan, S Madireddy, …

Proceedings of the 17th ACM Workshop on Hot Topics in Storage and File …, 2025

Environmental stressors significantly affect SSD performance and reliability, but studying these effects is difficult due to limited data, complex interrelated factors, and the specialized equipment required. Existing storage management techniques neglect environmental impacts, and accurately modeling these effects remains challenging because of varied NAND flash responses and the cascading influence of historical exposure.

Chance-constrained Flow Matching for High-Fidelity Constraint-aware Generation

Preprint: J Liang, Y Sun, A Samaddar, …

arXiv preprint arXiv:2509.25157, 2025

This paper introduces Chance-constrained Flow Matching (CCFM), a training-free method that incorporates stochastic optimization during sampling to enforce hard constraints while preserving high-fidelity generation. CCFM guarantees feasibility comparable to repeated projection but operates on noisy intermediates, reducing distributional distortion and complexity.

Data-Efficient Dimensionality Reduction and Surrogate Modeling of High-Dimensional Stress Fields

Journal: A Samaddar, SK Ravi, N Ramachandra, …

Journal of Mechanical Design 147 (3), 031701, 2025

This study presents a convolutional autoencoder framework enhanced by an information bottleneck loss to model tensor datatypes in simulations under limited data conditions, improving robustness by filtering nuisance information while retaining relevant features. The approach is combined with hyperparameter optimization and is numerically compared with dimensionality reduction-based surrogate modeling methods to demonstrate improved predictive performance for engineering applications.

EAIRA: Establishing a Methodology for Evaluating AI Models as Scientific Research Assistants

Preprint: F Cappello, S Madireddy, R Underwood, …

arXiv preprint arXiv:2502.20309, 2025

This paper presents EAIRA, a comprehensive methodology developed at Argonne National Laboratory for evaluating Large Language Models as scientific research assistants through multiple choice questions, open responses, lab-style experiments, and field-style experiments. These evaluations provide a holistic assessment of LLMs' factual recall, reasoning, problem-solving, and real-world research interactions across diverse scientific domains.

Efficient Flow Matching using Latent Variables

Preprint: A Samaddar, Y Sun, V Nilsson, …

arXiv preprint arXiv:2505.04486, 2025

Flow matching models typically overlook the clustering structure in target data, leading to inefficient learning, especially for high-dimensional datasets on low-dimensional manifolds. Latent-CFM addresses this by conditioning on pretrained deep latent features, achieving better generation quality with less training and computation across synthetic, image, and physical spatial field datasets.

Ensembles of Neural Surrogates for Parametric Sensitivity in Ocean Modeling

Preprint: Y Sun, R Egele, SHK Narayanan, …

arXiv preprint arXiv:2508.16489, 2025

This research improves ocean simulation accuracy by using deep learning surrogates combined with large-scale hyperparameter search and ensemble learning to enhance forward predictions and sensitivity estimations. The ensemble approach also quantifies epistemic uncertainty, increasing the reliability of neural surrogates for parameter tuning and decision making.

Evaluating the Safety and Skill Reasoning of Large Reasoning Models Under Compute Constraints

Preprint: A Balaji, L Chen, R Thakur, …

arXiv preprint arXiv:2509.18382, 2025

This work investigates reducing the computational cost of reasoning language models by using reasoning length constraints and model quantization, focusing on their effect on safety performance. It proposes fine-tuning with length-controlled policy optimization and quantization to balance computational efficiency with model safety within user-defined compute limits.

Evaluation of Test-Time Compute Constraints on Safety and Skill Large Reasoning Models

Conference: A Balaji, L Chen, R Thakur, …

Proceedings of the SC'25 Workshops of the International Conference for High …, 2025

This research examines two compute constraint strategies—reasoning length constraint and model quantization—to manage the trade-off between computational efficiency and safety in reasoning language models. It proposes fine-tuning models with a length-controlled policy optimization method and applying quantization to maximize chain-of-thought generation within user-defined compute limits.

Heimdall: Optimizing Storage I/O Admission with Extensive Machine Learning Pipeline

Conference: DH Kurniawan, RA Putri, P Qin, …

Proceedings of the Twentieth European Conference on Computer Systems, 1109-1125, 2025

This paper presents Heimdall, a machine learning-based I/O admission policy for flash storage that achieves 93% decision accuracy through domain-specific innovations and fine-tuning. Heimdall offers sub-microsecond inference latency, minimal memory overhead, and reduces average I/O latency by 15-35% compared to state-of-the-art methods, supporting various deployment scenarios.

Large Language Models Inference Engines based on Spiking Neural Networks

Preprint: A Balaji, S Madireddy, P Balaprakash

arXiv preprint arXiv:2510.00133, 2025

This research addresses the computational challenges of transformer models by exploring spiking neural networks (SNNs) for efficient design. The proposed NeurTransformer methodology combines supervised fine-tuning with existing conversion techniques to optimize transformer-based SNNs for inference, reducing latency caused by high spike time-steps.

LExI: Layer-Adaptive Active Experts for Efficient MoE Model Inference

Preprint: K Teja Chitty-Venkata, S Madireddy, M Emani, …

arXiv preprint arXiv:2509.02753, 2025

This work shows that traditional pruning methods for Mixture-of-Experts (MoE) models mainly reduce memory usage without significantly boosting inference speed on GPUs. The authors propose LExI, a data-free technique that adaptively selects the number of active experts per layer based on model weights, leading to improved inference efficiency and performance on language and vision benchmarks.

Multi-diagnostic Time Series Generative model for Prediction of the Edge Localized Modes in Tokamak plasmas

Article: A Samaddar, Q Gong, S Madireddy, …

DPP 2025, 2025

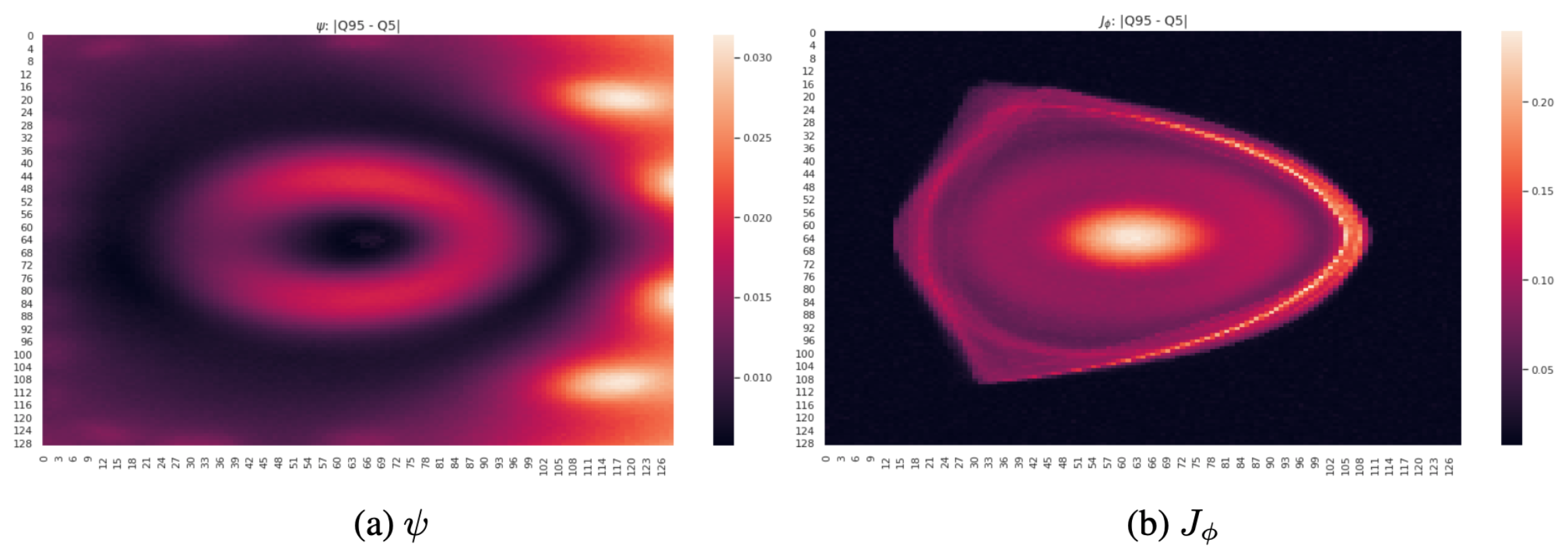

This research addresses edge-localized modes (ELMs), instabilities in high performance tokamak plasmas driven by edge current density and pressure gradients predicted by magnetohydrodynamic peeling-ballooning theory. It introduces a conceptual model for the evolving magnetic separatrix topology in tokamaks with a dominant lower hyperbolic point and discusses the nonlinear dynamics of ELMs governed by this topology.

Talks

Language Model Evaluation and Safety for Scientific Tasks

Argonne Training Program on Extreme-Scale Computing

Half Day Tutorial: Evaluation of AI Model Scientific Skills

Trillion Parameter Consortium's 2025 all-hands conference and exhibition

Multi-diagnostic Generative modeling of Edge-Localized Modes in Tokamak plasma

SciDAC PI Meeting (Poster)

Scalable Flow based Generative Models for Science

SciDAC PI Meeting (Poster)

Half Day Tutorial: Leveraging and Evaluation of LLMs For HPC Research

ISC High Performance Computing Conference